As I’m working on a cluster of ideas about robots, AI, automata and animals, here is an entry on Robot that I wrote for The International Encyclopedia of Communication Theory and Philosophy (2015).

The word “robot” was coined by the Czech playwright Karel Čapek in 1921, in his play R.U.R. He took his inspiration for it from the Czech word for “forced labor,” and the play presented what has become a familiar dramatic vision: artificial humanlike entities designed for precise or mundane work, but that ultimately threaten domination over their human creators. A technological imaginary of self-moving and autonomous artificial entities, usually humanlike, can be traced back through folktales and myth, from the medieval Jewish Golem back to the automatons created in Greek myth by the god Hephaestus and in the real world by the Greek 1st-century mathematician and inventor Hero of Alexandria (fl. 62 CE)—which include fabulous metal animals and self-driving vehicles. The Japanese fascination with technologies of humanlike automatons was evident from the popularity of karakuri—mechanical dolls and puppets—as early as the 17th century.

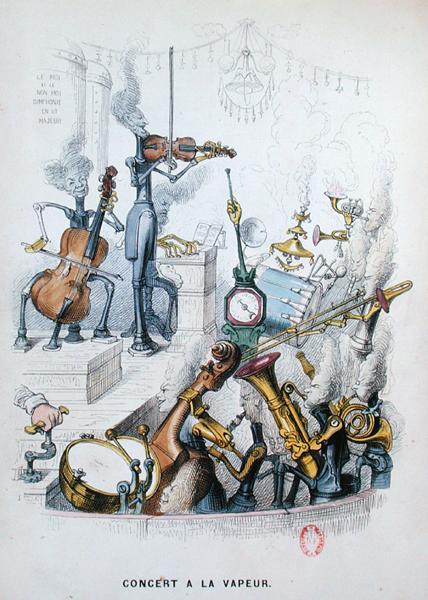

The 18th century saw the development of sophisticated new technologies for self-moving machines. These devices had industrial applications, most notably in the new textile factories with the Jacquard loom. The programming of automated looms through punch cards appears to have influenced the programming techniques that underpinned Charles Babbage’s design for a mechanical computer, the Analytical Engine, in the 1830s. The same principles and techniques also animated the spectacular humanoid automatons that were displayed at courts across Europe. The creations of Jacques de Vaucanson (who had been instrumental in the development of the Jacquard loom), notably his mechanical duck that could eat and defecate, and Pierre Jaquet-Droz’s musicians and draughtsmen were presented for entertainment and wonder, bound up in philosophical reflections on the nature of consciousness, reason, and free will. One of Jaquet-Droz’s creations, a doll of a small boy, could be programmed by a set of cams to write any text up to 40 letters in length.

This bifurcation in the genealogy of robots between entertainment and industry raises a taxonomical issue that persists today. Popular images of robots tend to be, first and foremost, human-shaped—that is, “androids,” like the 18th-century automatons—either in their physical form or in terms of their simulation of human consciousness and intelligence. The Jacquard loom’s industrial and nonhumanoid descendants, however—the numerous and generally unremarked automated systems and factories of the 20th century—tend not to elicit philosophical attention or reflection. The robot arms of car production lines perhaps fall somewhere between the two, automating human effort and labor, as they do, through the simulation only of a human limb. Even among roboticists, there is no hard-and-fast definition of “robot”; there is, however, a set of characteristics or abilities that are often regarded as significant in distinguishing robots from other complex machines. These include sensing abilities, actuation, intelligence, and degrees of autonomy.

According to Winfield, a robot is:

- an artificial device that can sense its environment and purposefully act on or in that environment;

- an embodied artificial intelligence; or

- a machine that can autonomously carry out useful work. (Winfield, 2012, p. 8)

Not all of these characteristics have to be present in any particular machine for it to be called a robot. The popular image of a robot is that of a fully autonomous, artificially intelligent entity, whereas many experimental, industrial, and domestic robots function through a mix of autonomous behaviors and remote control by a human operator. Many real-world robots are tele-operated, for example those designed for underwater exploration, whereas others are considered “semi-autonomous.” A notable example of semi-autonomous robots is that of “drones”: military unmanned aerial vehicles (UAVs) that fly themselves to a preset position but then are controlled by human operators for surveillance or bombing missions.
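The division of labor in a semi-autonomous machine can be sketched in a few lines of Python. This is only an illustration of the transit-then-handover pattern described above, not a model of any real UAV: the one-dimensional "world," the function name `run_mission`, and its parameters (`station`, `tolerance`, `max_step`) are all assumptions made for the example.

```python
def run_mission(start, station, operator_commands, tolerance=0.5, max_step=1.0):
    """Simulate a vehicle on a line: autonomous transit, then operator control.

    Returns a list of (mode, position) pairs, one per tick.
    All names and units here are hypothetical.
    """
    position = start
    log = []
    # Phase 1: autonomous. Sense the distance to the preset station
    # and act to close it, moving at most `max_step` per tick.
    while abs(station - position) > tolerance:
        error = station - position
        position += max(-max_step, min(max_step, error))
        log.append(("autonomous", position))
    # Phase 2: tele-operated. Every movement now comes from the human operator.
    for command in operator_commands:
        position += command
        log.append(("tele-operated", position))
    return log


if __name__ == "__main__":
    for mode, position in run_mission(0.0, 3.2, [0.5, -0.25]):
        print(mode, position)
```

The machine senses and acts on its own only during the first loop; once it is on station, its behavior is whatever the operator commands.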

Winfield notes that, for all the excitement about robots as new humans, actual experimental and instrumental robots are not humanoid at all; rather, they are “in almost every respect very crude simulacra of animals” (Winfield, 2012, p. 5), and “no roboticist would claim their robots to be any more than a simulation of some limited aspects of life or intelligence” (Winfield, 2012, p. 8). Or, as exemplified by UAVs, they might simply be semi-autonomous versions of existing types of machines and vehicles. This is primarily for practical reasons: the upright bipedal locomotion of humans is extremely difficult to engineer and is generally not necessary—wheels, tracks, or multiple legs cope with varied terrain more efficiently. Industrial robots are designed to operate in specific environments and to conduct a narrow range of activities, and they do not need the flexibility and multifunctional redundancy of the human body.

Some roboticists distinguish between humanoid robots, which might be highly stylized simulations of aspects of the human body (or of parts of it), and androids, which are specifically designed to appear convincingly human. Androids with humanlike expressions and eye movements, for example, are generally experimental, but the longer term possibilities are clear: machines that can interact with humans in a useful, reassuring, perhaps therapeutic manner. The potential of humanoid robots lies in accomplishing tasks that necessitate working alongside, and perhaps learning from, humans. In such contexts—in an environment and set of behaviors shared with actual humans—some aspects of the human body’s scale, movement, and capabilities may well be necessary.

Lucy Suchman’s anthropology of human–machine interactions establishes a critical interrogation of the explicit and assumed models and concepts of the human—or “humanlike”—in artificial intelligence (AI) and robotics research. Taking “celebrity robots” such as Cog (developed by Rodney Brooks at MIT) and Kismet (developed by AI researcher Cynthia Breazeal), she sees in this research an explicit exploration of the boundaries between humans and nonhumans. These experimental robots are on the border between the humanoid and the android—Kismet is an anthropomorphic and expressive head, Cog a head and a torso with moving arms and hands; they explore embodiment, but also sociality. Kismet in particular is designed to interact face to face with humans, in a physically expressive materialization of software conversational agents or chatbots. Both, Suchman explains, are born of “the new AI, a turn away from intelligence figured as symbolic information processing, to humanness as embodiment, affect and interactivity” (Suchman, 2007, p. 229).

By the early 19th century the craze for humanoid automatons had waned and they disappeared into the fairground and sideshows (Lister, Dovey, Giddings, Grant, & Kelly, 2009). Robots engaged the popular imagination again in the early years of the 20th century. One of the most famous cinematic robots, Maria in Fritz Lang’s Metropolis (1927), vividly figured the industrial and mechanical servitude of the workers in the film’s dystopian future. In science-fiction literature probably the most influential robots are those in the work of Isaac Asimov (e.g., Asimov, 1993). From the 1940s on, Asimov imagined a world with fully intelligent robot servants and companions, rendered productive and safe through his three laws of robotics. For example, in the short story “Doctor Susan Calvin—Robot-Psychologist,” the laws are set out thus:

The Three Laws of Robotics:

1. A robot must not harm a human. And it must not allow a human to be harmed.

2. A robot must obey a human’s orders, unless that order conflicts with the First Law.

3. A robot must protect itself, unless this protection conflicts with the First or Second Laws.

Handbook of Robotics, AD 2058 (Asimov, 2013, p. 9)

The exploration of the boundaries of these laws drives the narrative drama of the books—and of the recent film based on them, the popular I, Robot (2004)—and addresses issues of free will, determinism, and the nature and limits of human agency.
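The precedence built into the three laws quoted above (each law yields to those before it) can be read as a strict priority ordering over possible actions. The sketch below is one way to make that reading concrete; it is an interpretation for illustration, not anything Asimov specified, and the `Action` fields and the `choose` function are invented names. It also flattens each law into a yes/no flag, which is exactly the kind of simplification the stories go on to test.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Action:
    """A candidate action and the laws it would break (hypothetical model)."""
    name: str
    human_harmed: bool = False      # First Law: harm through action or inaction
    disobeys_order: bool = False    # Second Law: ignores a human's order
    endangers_robot: bool = False   # Third Law: fails to protect itself


def violations(action):
    """Order an action's violations with the highest-priority law first."""
    return (action.human_harmed, action.disobeys_order, action.endangers_robot)


def choose(actions):
    """Pick the action whose violations are lexicographically smallest,
    so a lower law is only ever broken in order to keep a higher one."""
    return min(actions, key=violations)


if __name__ == "__main__":
    options = [
        Action("obey an order to push a bystander", human_harmed=True),
        Action("refuse the order", disobeys_order=True),
        Action("refuse and step into danger", disobeys_order=True, endangers_robot=True),
    ]
    print(choose(options).name)
```

Because Python compares tuples element by element and treats `False` as smaller than `True`, any action that keeps the First Law beats any action that breaks it, whatever happens lower down the list.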

Subsequent depictions of robots in film and literature tend to fall into one or more of four main categories: the dangerous or killer robot; the robot as a deceptive simulacrum; the comical robot; or the robot as servant or slave. Maria was both dangerous and dissimulating; the future robots of the Terminator film series are either killers or protectors; the “droids” in the Star Wars films are both servants and comedic figures, C-3PO explicitly referencing a bumbling English butler. Like their automated forebears and experimental cousins, robots and AI in popular media have often explored the boundaries between the human and the nonhuman; fictional robots often develop humanlike consciousness, emotions, or conscience. From the Japanese character Astro Boy (in Japan, the Mighty Atom), which was popular in both manga and anime from the early 1950s on, to HAL in 2001: A Space Odyssey in 1968, RoboCop and Blade Runner’s replicants in the 1980s, and Spielberg’s AI: Artificial Intelligence in 2001, artificial humanlike entities in films have questioned their own being and possibilities. The embodied, material boundaries between the human and the robot as well as between the moral and the cognitive are further blurred in the figure of the cyborg. RoboCop is an example of this: most of his body is replaced by robotic components, and his behavior is limited by Asimov-like laws.

A persistent anxiety in popular fiction and film is the assumption that any emergent self-awareness in robots and in AI creations will ultimately result in their domination over the human race. This is the premise in the Terminator series of films and in many stories of the comic 2000 AD, and it is parodied in the song “The Humans are Dead,” the Flight of the Conchords’ pastiche of Daft Punk’s techno-aesthetic. Some roboticists and AI researchers make analogous predictions about the imminent physical and cognitive superseding of the human race by its creations. For example, Hans Moravec predicts that robots and AI, “with human perceptual and motor abilities and superior reasoning powers, could replace human beings in every essential task” by the mid-21st century (Moravec, 1999/2007, p. 514).

Fictional and scientific predictions of the development of robots have, however, persistently overestimated the imminence of self-aware and autonomous beings. Winfield points out that perhaps the biggest challenge to the invention of convincing humanoid or android robots is not the mechanical or visual simulation of human appearance and behavior, but rather that of human intelligence. Even insect intelligence, let alone human conversation and reasoning, has proved stubbornly resistant to simulation within the dominant paradigm of AI research, top-down symbol processing, and Moravec’s time line of robot evolution, drawn up in the late 1980s, has already proved significantly overoptimistic in its predictions of development in both robot movement and robot intelligence. AI creations might be able to beat most human players in the tightly controlled symbolic environment of the chess game, but any degree of autonomous decision-making and behavior in a dynamic and complex environment is a long way off.

Robots are ubiquitous in the contemporary world, but—a few exciting examples apart—they are generally the mundane machines of the assembly line, or domestic products such as autonomous vacuum cleaners. There is significant research into the possibility of robots’ performing precise and repetitive tasks in medical and care settings, and drones are just one product of extensive research, development, and investment in the military use of robotics and related autonomous sensing systems. And, perhaps because they are furthest from the “humanlike” in appearance and behavior, the multiple entities of “swarm” robotics have received less popular attention than humanlike or animal-like machines. Modeled on insect sociality, influenced by artificial life research on cellular automatons, and driven by the practical challenges of operating autonomous devices in complex environments, swarms operate through simple rules of behavior that, when implemented by a dynamic group, facilitate the emergence of complex and responsive actions. As in the relationship between the ant and its colony, the capabilities and “intelligence” of a swarm greatly exceed those of any individual.
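How a simple local rule can add up to group-level behavior is easy to show in a toy simulation. The Python sketch below is not drawn from any particular swarm-robotics system: the single rule (drift toward the average position of whichever agents are within sensing range), the function names, and the parameter values are all assumptions chosen for illustration. No agent is told to "form a group," yet the population draws together.

```python
import random


def step(positions, radius=3.0, rate=0.2):
    """Apply one local rule to every agent: drift toward the mean position
    of the neighbours it can sense (those within `radius`)."""
    new_positions = []
    for x, y in positions:
        neighbours = [(nx, ny) for nx, ny in positions
                      if (nx - x) ** 2 + (ny - y) ** 2 <= radius ** 2]
        # An agent always senses itself, so `neighbours` is never empty.
        cx = sum(nx for nx, _ in neighbours) / len(neighbours)
        cy = sum(ny for _, ny in neighbours) / len(neighbours)
        new_positions.append((x + rate * (cx - x), y + rate * (cy - y)))
    return new_positions


def spread(positions):
    """Mean distance of the agents from the centre of the whole group."""
    cx = sum(x for x, _ in positions) / len(positions)
    cy = sum(y for _, y in positions) / len(positions)
    return sum(((x - cx) ** 2 + (y - cy) ** 2) ** 0.5
               for x, y in positions) / len(positions)


def simulate(n_agents=30, steps=50, seed=0):
    """Scatter agents over a 10 x 10 area, run the rule, and return the
    group's spread before and after."""
    rng = random.Random(seed)
    positions = [(rng.uniform(0, 10), rng.uniform(0, 10)) for _ in range(n_agents)]
    before = spread(positions)
    for _ in range(steps):
        positions = step(positions)
    return before, spread(positions)


if __name__ == "__main__":
    before, after = simulate()
    print(f"spread before: {before:.2f}, after: {after:.2f}")
```

Each agent consults only its immediate surroundings; the clustering is a property of the group, which is the sense in which a swarm's capabilities exceed those of its members.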

Actual robots are making inroads into everyday and popular entertainment culture. Toys such as Furby and Aibo have been available for decades, and systems such as LEGO Mindstorms and NXT offer sophisticated robotic systems for experimentation and play. Clockwork tin robot toys, popular since the 1950s, are animated but not autonomous, whereas actual robot toys offer varying degrees of autonomy, programming, sensing, and even a capacity for learning.

Though robots tend to be thought of as the combination of a mechanical body and a software control system, there is no meaningful distinction between them and software automatons. Robotics research is conducted through software simulation as well as through engineering, and everyday communication and entertainment are increasingly mediated by software agents, often directly understood as robots (“bots”) (Wise, 2012). From automated online interactions (“chatbots”) and other intelligent agents to the nonplayer characters of computer games, media communications today happen increasingly between human and robot as much as they do between human and human.